It has been a few weeks since the marketing machine we all know as Apple announced their plans for the TV. Given that space of time, I think that people can now look at the announcement a little bit more objectively and try to make true judgement calls on it. Everyone is asking, what can we expect from this product?

I think to truly have insight into any product announcement, you have to ask a few other questions first:

- Is this what they set out to build?

- What are their future plans?

If you answer these questions, you can begin to answer the larger question of what to expect.

As someone who builds his business around platforms like Apple’s, my mind has been guessing and evaluating the possibilities for quite some time. With the new announcement, we now have a tangible picture of what they’ve done for the Apple TV and can draw some conclusions about where it’s going. Since I have pondered the what ifs a lot longer than most, I’m going to give you a little dive into how my brain has deconstructed it all and you can take from that what you will. A forewarning, I think about this a lot. I have a lot to say, but I think it will help you draw valuable insight into Apple’s new product and how they operate.

So let’s jump into our first question, “Is this what Apple set out to build?” It is important to note that products typically don’t pop up overnight. They are planned well in advance and evolve over time. Inside discoveries and outside market forces tend to shape the direction. I like to think that Apple has product visions that stretch over multiple years rather than operating on quarterly reactions. Other forces affect it, but I believe that, more than other companies, they have very strategic efforts that they carry out. Let’s take a trip back in Apple’s timeline to see what I mean.

Here are some key dates that we can use to potentially understand Apple’s TV initiatives.

Each of these dates plays a role, in my mind, in the evolution of the product. Some were initial inspirations and others were planned initiatives around the TV itself. If you look at what happened on each of the dates and focus on how they relate to video, the timeline starts to tell a story.

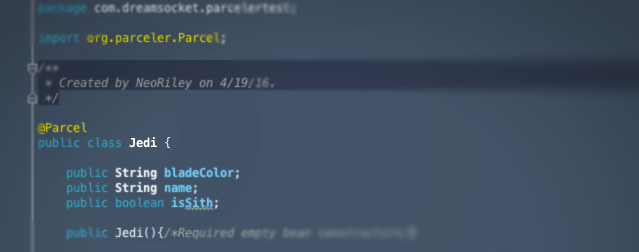

The iPod and iTunes

There have been many influences over the years, but what can we point back to as the original spark? I’m going to pick the iPod and iTunes. It is common knowledge that iTunes and the iPod changed Apple and the entire music industry forever. Apple hit a sector of the entertainment industry that was falling apart and desperately needed a solution. Apple offered it, and in turn became the main broker of music entertainment. This introduced a completely new outlet to their business, selling content. This obviously opened their eyes and filled their pocketbooks. I don’t think many people saw it coming, not even them. It was the beginning of a shift in their thinking.

Media Centers and the Mac Mini

Over the course of the next few years, Apple really prospered. Obviously anyone at the helm of a ship like that starts asking what other things can we apply this winning formula to. It seems like a no brainer, TV shows and movies right? They are consumable media just like music. At the time of iTunes, video content wasn’t very digital yet, but people were exploring its potential. In 2002 Microsoft introduced a Media Center Edition of XP that was a play into the space. Their OS sought to provide access to movies, TV, pictures, music and more in a “leanback” experience. This really got the conversation going about a computer for the living room that brought all media in digitally.

Fast-forward to January 22, 2005, and Apple introduces the Mac mini, an extremely compact version of their desktop computer. At the time, the market was saturated with monster computer towers that took up enormous amounts of space and had 50 different components thrown into them. This was a great move by Apple on many levels. It started to remove a lot of excess in desktops, reducing both cost and size. At the time, people had become accustomed to the desktop beasts they were forced to live with, so it didn’t transform that market overnight. It was good for it, but it wasn’t a game changer. However, if you look at it from a different perspective you can see a bit more genius in it. As people began exploring “media centers” in their living rooms, the giant towers weren’t accepted there. Consumers weren’t going to be OK with putting some massive brick beside their TV. The Mac mini was the perfect form factor for that room. If you compare it to the modern Apple TV, the two look almost exactly the same. I’m sure a lot of the components, tooling, and manufacturing capabilities used to create the mini were able to be leveraged to produce the TV.

At this time, I believe Apple had a vision of getting into the living room, but they weren’t sure what it was going to look like yet. They knew video content still hadn’t made a huge shift to digital, but it was gaining a lot more traction than ever before. Flash video had been introduced in 2003 and was updated in 2005 to actually be more viable. People were starting to explore the potential of this technology, both large media companies and startups. The biggest player in the movement, YouTube, went live on February 14 of that same year. This time period provided the biggest spark for the digital video movement. It started to make it more viable.

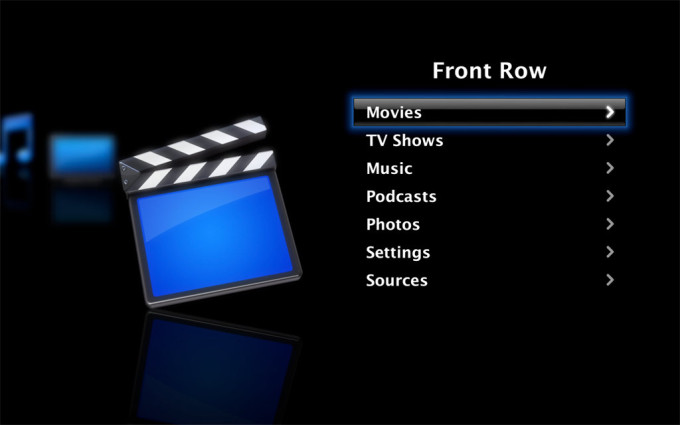

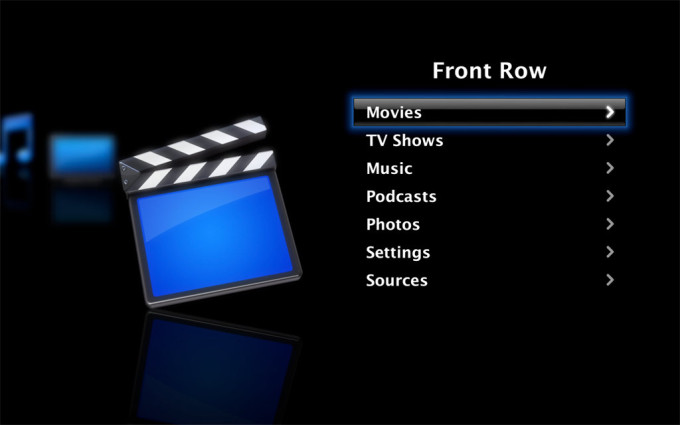

Front Row and Purchasable video content

Apple was reading all the signs, and the mini provided them a vessel to explore with early adopters. They were playing it safe, because they still needed to determine consumer behaviors around the space before they jumped into it. It was still evolving. Unlike Microsoft and Google who are quick to get their ideas out, Apple tries to make sure they don’t make a play at something until they feel the play is solid. They do explore, but are calculated in their explorations. Jumping to October of that same year, you can see this. Apple announced new software Front Row and introduced TV show purchases to iTunes. For those not aware of what Front Row was, it could be seen as Apple’s first “media center”. When announced, their computers started shipping with small remotes. When used with the Front Row software, it turned your desktop computer into a leanback experience. You were able to browse photos, listen to music, and watch videos. The introduction of TV show purchases to iTunes, along with movies the next year in September of 2006, marked their desire to bring the same model they had applied to music to video. Even if it was an exploration play, it showed Apple had interest in the living room.

Apple TV (v1)

The original Front Row, remote, and iTunes purchases probably got very small usage, but I believe this taught Apple a lot. It illustrated that the living room was a different experience. Consumers weren’t going to just move their desktops into that room. They weren’t going to spend a fortune for that experience. They didn’t need a powerful beast to just consume things. They just needed the simple things that Front Row provided on a less expensive device. It could be very focused and lean. Fine tuning their software and hardware, on January 9, 2007, Apple brought out their answer, the original Apple TV. With its launch and for years to come, they also made a very strategic note that it was a pet project for them, an experiment. They knew the market wasn’t completely there yet and they didn’t want the product to be viewed as a failure. This type of marketing definitely buffered them from getting criticism over the years as their other products had more stellar success stories. I also truly believe that they understood how complex the TV and movie business was. Unlike the music industry, the TV and movie business wasn’t in dire distress. The relationships and how it was run made for a huge hurdle as well. It has many layers, which I’ve mentioned before. Apple wasn’t going to be able to take that market as easily as they had with music. Knowing it was eventually going to happen, their approach was genius.

The iPhone distraction

The next 8 years, I consider years full of learning and distraction. The distraction came from Apple’s second modern blockbuster, the iPhone released on June 29, 2007. In a world full of people looking at their smartphones every 5 minutes, it is hard to imagine a world that existed without them. Being in the industry, I remember the promise for years of how insane the market could be. The number of people who owned phones vs computers, the rate at which they acquired new devices, everything showed promise. It wasn’t until the delivery of the iPhone that anything ever delivered on it.

Personally, my guess is Jobs was more interested in the space of personal computing than small handhelds. He was a creator. I think what we saw the iPhone become, he originally had planned for the laptop/tablet. In a way the phone was a distraction for him from creating a device to create. It was a device to communicate and consume. I tend to wonder if it went against his grain a bit, given I feel he desired one on one communication.

If you back up to September 7, 2005, 2 years prior to the iPhone launch, Apple announced an attempt with Motorola to put iTunes on one of their phones. It made total sense, given the closeness in form factor and the ability to have the iPod morph into a connected device. I see the Motorola venture as another experiment. Apple was looking for what that experience might be. They knew the potential for smartphones, but had to figure out how to approach the industry first. I think the experiment showed them how underserved the market was. It probably also ate Jobs up that it was so bad.

The App Store

A year passed after the launch of the phone, and in July 2008, Apple announced the App Store on the phone. This was a monumental game changer for computers in many ways. Up to this point, the software industry was either shrink wrap or purchased direct from the creator digitally. The phone proposed interesting issues of “how do you get apps on it?” and “how do you ensure they don’t wreak havoc on the device?” Since iTunes and music were the original motivating factors to move to the phone, Apple took a play from its own book. They created the App Store to be iTunes for apps. Not only were they able to sandbox the things being deployed to the phone, they became the curators and brokers of what went on the device. It wasn’t a new concept, but it was the first time someone was able to pull it off. With the ability to have an instant market for what you create, this brought developers in swarms to the platform. It transformed the device into something entirely new. It became a brain and game console in your pocket.

Even more so than the iPod, I don’t know that Apple saw how big this would become. Their company focus shifted again. The phone was their golden goose and would hold the company’s main focus for years. Everything that hadn’t been fully developed got pushed down in priority.

iPad

The one thing that didn’t lose priority was the device that I believe Jobs originally wanted to create, the iPad. On April 3, 2010, the first version was released. In watching Jobs present it, I feel that it was very evident this was one device he had envisioned for a long time. In many ways, the iPad was just a big iPhone. Some people mocked it as such and couldn’t see the need for it. What they weren’t seeing was that it lent itself to consuming content that wasn’t as suitable for the phone, from both a connection and form factor perspective. One of those was video. If you are going to be in a place that has wifi, sitting instead of standing, would you rather watch a movie on an iPhone or an iPad? The larger form factor also gives you more room for control. It filled a gap that the phone and laptops didn’t.

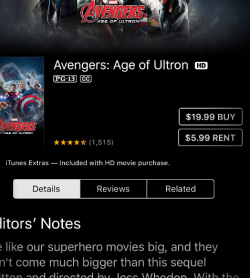

Purchase vs Rental

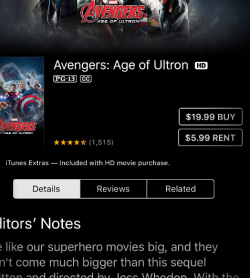

As I mentioned, while the iPhone revolution was going on, Apple was still learning. The TV took a backseat, but I think it needed to. The market was still too complicated. Apple originally approached it with a purchasing model, applying the same logic to video content as they had to music. The problem with that approach was people don’t consume TV shows and movies in the same way they do music. Typically unless you have kids and you just throw a movie in and hit repeat, you aren’t going to watch the same thing over and over again. With music, you do. Coming from a behavior of purchasing albums and having the desire to listen to the content over and over again, people were willing to buy. They wanted the ability to own their content and take it with them. The iPhone and apps like Spotify and Pandora have since changed this behavior, but coming off of CD sales and Napster, it was what people expected.

If you look at the video market, it was dominated by rentals, VHS and later DVDs. DVD sales existed, but they weren’t the dominant choice. Apple later realized this and 3 years after introducing purchasing, announced rentals on January 15, 2008. The market for the TV was still very niche though.

AirPlay and one device to rule them all

With the massive success of the iPhone and iPad, I bet Apple’s thinking shifted. Apple started asking the question, what if the iPhone is the center of everything? If I have access to all my content thru that device and I carry it with me everywhere, why not make it be the brain? On September 1, 2010, Apple started with another experiment: AirPlay. AirPlay is the ability to mirror or broadcast content from one device (iPhone, iPad, or Mac computer) to another device like the Apple TV. This is a powerful concept and requires that you really only have one smart device and anything it broadcasts to merely needs to be a receiver. Bill Gates outlined it in his book “The Road Ahead”, written in 1995. I explain this point because many people don’t know what it is or that it even exists. That is part of its issue: it requires multiple unified elements and an understanding of how they all tie together.

I’ve longed for this concept to become true. In presentations I gave back in 2005, I talked about the iPod becoming just that. Even back then, it was evident that it could become a handheld super computer. Google made a go at this too with Chromecast, a simple TV receiver/dongle priced under 40 dollars that was introduced on July 24, 2013. More recently there have been attempts on Android and by Microsoft to take the extra step and make the device change its UI based on the context of the receiver. Microsoft labels it Continuum. It will come, but the complexity of that market will take time to evolve.

The complexity of the TV market

AirPlay illustrated Apple was trying to look at different approaches to the TV market. In addition, I think they were trying to figure out a much harder issue, how to enter it from a content perspective. Apple tried both purchase and rental models with content. Traditional media companies were fine with this approach since it was more of a secondary market. What I think Apple came to realize is that consumption behavior for video is all about first run and being the original distributor. This made their job much harder.

Like I mentioned in the Apple TV (v1) section, there have been huge barriers if you were going after the premium content tier (movies, TV shows). We know from 2014 FCC filings by Comcast and Time Warner that Apple had approached them about jointly developing a set-top box. The relationships between content providers and MSOs (AKA multiple-system operators like Comcast, DirecTV, etc) are so hard to break, I believe Apple realized that a strong play would be to work directly with the MSOs. The problem with that is that the MSOs knew they still had a good thing and saw what happened to the music industry. I think they may have strung Apple along. Why let them in if you are doing well and your wall is high?

TV starts to break down

If you read my article “What is happening to the entertainment industry?”, you’ll see that the walls that were once so high have broken down. It didn’t happen overnight like the music industry, but the entertainment industry is slowly entering into an initial state of distress. One of the largest catalysts of this movement is Netflix. They originally copied the cable companies’ subscription model and applied it to DVDs, then transitioned that success to online distribution in 2007. One of the keys was convenience and paying a small monthly fee. Consumers were used to subscriptions; it was expected and welcomed. The cost played a huge role too. In comparison to DVD rentals or cable subscriptions it was more approachable. Netflix could arguably be labeled the first online cable company.

What’s the cheapest way to get Netflix on TV?

As Netflix became more popular online, people began to have the desire to watch it on their TV. This fueled what I like to call the “how can I get Netflix on my TV” phase. Netflix was incredibly smart about trying to get it on anything and everything that could land them on the TV and in your living room. Whether it was a smart TV, Xbox, Apple TV, or some random device like a Boxee, the public started to seek out the cheapest way to get Netflix on their TV.

In the spring of 2014, both Google and Amazon introduced versions of their OS for the TV. However, I think the real tide turned on November 19, 2014 when Amazon introduced a small dongle for the TV known as the Fire Stick that ran their TV based OS. The kicker was they sold it for only $19 originally for Prime members. From a cost perspective, it made this a no brainer. It was so cheap, why would you not buy one? What was surprising was the device and OS were actually nice. It entered in for most people as the cheap way to get Netflix (and Prime) on the TV, but the man behind the curtain knew it had an app store and was running Android. If you jump forward to today, a lot of content companies like HBO, Showtime and others have applications running on the device.

Is the new Apple TV what Apple wanted?

Finally, let’s talk about the new Apple TV (v2) that was announced on September 9, 2015. I think we can look at all of the points mentioned in this article and see how this product evolved. We know Apple has had an interest in the TV space all along; they knew the promise of it. I think they have been cautious about how they approach it both from a device and content perspective. They experimented with different approaches, but I think very early on they realized they needed a relationship with content providers in the same way that MSOs had. I believe they also realized that because the industry was relatively stable, it was going to be hard to accomplish that. Their attempts to get an insider advantage by working directly with MSOs failed. However, the industry has also taken a significant turn. Netflix and Amazon have started to illustrate there are approaches that work.

Another key point that we haven’t discussed is games. Games have been a staple of the living room since the days of the Atari 2600. With the iPhone, Apple owns the handheld gaming market. They have instant distribution. Why would they not take on consoles like the Wii? I suspect this was one of the other pushing points for the Apple TV.

I feel Apple really wants to be a distributor like the cable company. From a revenue perspective, it is a market they aren’t capturing like they could. I think they’ve always wanted to be there. How they approached it has changed and evolved, but their underlying goal has been consistent.

The TV market shifting how it has, other devices seeing success, Apple’s desire to compete with consoles, and most importantly consumer awareness and desire to bring interactivity to the TV experience have laid out a perfect time for them to make a play.

The product isn’t exactly what they wanted but it holds to the essence of what they’ve been striving for. Time will tell, but I believe it is missing a big key component they desire: a subscription/distribution outlet.

What are Apple’s plans?

So, if we consider that Apple’s original plans are incomplete, do we think they are still going after them? Absolutely.

Apple does hardware and OS level software extremely well, but they tend to trail a bit behind companies like Google or Amazon who excel at cloud based software. Netflix’s success has come from their cloud based subscription/distribution platform for video content. To compete and do well in this market, you have to have that. On May 28, 2014, Apple acquired Beats Music which did just that. For those who only see things at a surface level, one might expect the acquisition was done in order to get their line of physical headphone products. I think that was just an added bonus. In buying Beats, they got multiple elements that could play critical roles in their business. The most important one was the subscription service. We have already seen this take form with Apple Music. Their once dominant position in the music industry has faded with Pandora and Spotify. The service helps them address that AND it helps them with a future setup for TV. They will have an app just like Netflix or their own Music app that is a subscription service. My guess is they are still trying to work thru content relationships and they want it to be done right. In addition, having the device in the market will help drive discussions around those relationships.

Talk to me like “Her”

You may be asking, what about the software on the device itself? Is that what Apple intended? For years I’ve felt like one of the hardest pieces to tackle with the TV is the interaction model with it. Directional based navigation controls, ugh! Apple stayed the course there with the small addition of a swipe face for a little more control. Why do you think that is? Apple showed you why: it is voice. They understand that the living room interaction is limited (outside of games). Really you want to just find and consume something. The goal isn’t interaction as much as it is getting to the content and consuming it with as little effort as possible.

Siri has become more and more powerful over the years. It is nowhere near the level that we see in the movie Her, but that reality is coming. Amazon, Google, Microsoft and Apple all understand that, for consuming information and media, the best interface is a non-tangible interface. Think about how much easier and more efficient a natural language conversation can be. What’s the weather today? What was the score for last night’s Braves game? Can you find that movie with Robert Redford about baseball? This is Apple’s true play for the future of consumption based interaction with computers. The TV environment lends itself better than any other to a voice interaction model.

The problem with basing interaction on voice is exposing content in applications that by their very nature hide it. How do you say “I want to watch Orange is the New Black” when the only place you can consume it is in the Netflix app? Unlike the web that was indexed by Google and liberated with its search, mobile apps have been silos. Google and Apple have both approached this problem recently with their App Indexing and Universal Links solutions. We finally have indexing of applications. Just as search transformed the web, this level of content awareness will take OSs to an entirely new level.

How important are applications?

If you paid attention to the keynote and how content was structured within the OS, something also stuck out. Not only was Apple providing an easy way to jump into content from search, they curated the results in a similar fashion to how Google does on the web. To see what I mean, do a search for Big Bang Theory. Rather than simply list out various apps that might provide the show, they created a buffer page with show details too. If you are using voice as your interaction model and your goal is to just consume content, applications start to become less important to an extent. It should be very interesting to see what impact this has.

The Apple Gaming console

My particular interest with the TV obviously is how it relates to media. As I mentioned, the other driver for Apple is games. It is the highest revenue stream they have in the App Store. Games and the living room have been like milk and cookies, a perfect match. It boggles my mind that Amazon and Google haven’t put more emphasis on growing this on their TV based platforms. I know it is in their sights, but it seems they haven’t put a large focus on it yet. Obviously the big draw for them has been the cheapest way to get Netflix on the TV, but man, there’s a huge opportunity there. Apple consumers will expect it there and developers will see the potential. It is going to happen. If you jump back to Apple’s WWDC conference in the spring, you will see they have big plans. As they were showing off new games being developed in Metal (their programming answer for taking game graphics and processing to the next level), it was obvious the games being shown were on a level that didn’t make sense for the casual expectations of handhelds. Apple is looking to take on the Xbox and PlayStation.

Should we be excited?

Apple has always been great about entering a market at the right time and using their marketing and approach to transform it. When Apple makes a play, the general public accepts it more than any other company’s attempts, because they perceive it as groundbreaking and are made more aware of it thru stellar marketing. They aren’t doing that much more than the others out there, but the public’s attention to it and the developers’ willingness to get behind it will open this market up.

I have a feeling that it probably scares the MSOs. I would not be surprised if, when content companies put their TV Everywhere apps on the platform, you see some choice providers missing from the list. Once the general public starts to understand that they can have the same on-demand experience on their TV as they have had with their other devices, it changes everything. It exists now, but for the mass public it’s not there. That perception shift will make people question cable boxes. Change is inevitable though.

I personally am excited. I’m not an Apple Fanboy, but a fan of progression and the ability to create great things. Apple tends to open up doors and that makes me happy. I’ve been waiting for a long time for the TV to change. In the background, it already has. It has died little by little, but there is hope.

Just like Apple, at Dreamsocket we’ve experimented thru the years. In November 2006, we worked with PlayStation prior to the PS3 launch to dream of what a merged TV and game experience might look like. When asked why the project was important to me, I used the opportunity to try to throw my ideas at them. I noted that for me as a developer, I wanted Sony to create a rapid development model that could allow anyone to publish to their device. I also mentioned that there was no need for discs; digital distribution over the internet direct to the device made a lot more sense. I just wanted to see the opportunity to be in the living room. I’ve always wanted it. There is something about having your app on a big screen.

Over the course of the next 9 years, we took other projects here and there to test the waters. When the Google TV came out, we built apps for it. Getting an opportunity to help create the actual interface for a gaming device itself, we jumped on it. Exploring how a phone could interact with TV, we made a play at it.

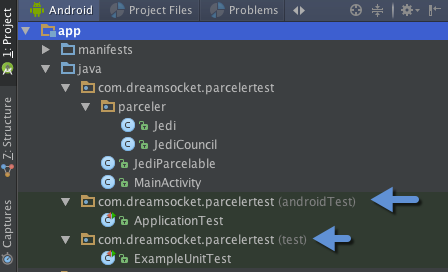

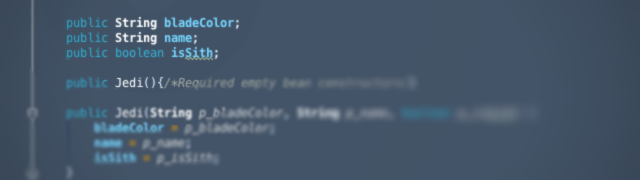

TV has been waiting to be modernized for years. Knowing that the new Apple TV was coming out, we developed new applications for some of our media clients on the Android and Amazon Fire TV devices. They were actually strategically targeted to come out the week after the Apple announcement and a few days before the Amazon one. They haven’t released yet, but they are coming down the pipe.

Why did we do this? Knowing that Apple has gone from experimentation to going after the TV market, I knew that unlike anyone else, they have the capability to be a catalyst for the space. I’ve hated to see TV living in legacy for years. Even though the living room is less dominant now, it still is the first and final frontier for entertainment. Most importantly, developing for the TV is fun.

If you managed to read all of this, congrats and thank you ;). I could go into much deeper levels on many different points, and I missed a lot of things that I also feel are relevant, but it gives you a peek into my brain. That peek may be right or wrong on things, but it shows the importance of trying to understand the big picture. If you can do that, you can see how things emerge and you can spot opportunity. I’m excited about the Apple TV.
